Top 10 AI-Powered Cyberattacks to Watch for in 2023

In today’s technology-driven world, artificial intelligence (AI) has become increasingly integrated into our daily lives. While it has undoubtedly brought numerous benefits, it has also opened new doors for cybercriminals to exploit.

AI-powered cyberattacks have become a major threat to businesses and individuals alike. These attacks are becoming more sophisticated, automated, and difficult to detect using traditional security measures. As a result, it has become more important than ever for individuals and organizations to stay vigilant and take proactive steps to protect themselves from these evolving threats.

In this article, we will delve into the top 10 AI-powered cyberattacks to watch for in 2023. We will explore the various ways that cybercriminals are exploiting AI and machine learning (ML) to launch attacks, and provide insights on how to implement AI-based security measures to enhance protection against such threats.

Top 10 AI-Powered Cyberattacks: Threats, Examples & Solutions

In 2023, we can expect to see a rise in AI-powered cyberattacks that are more sophisticated, automated, and effective than ever before. Cybercriminals are constantly finding new ways to exploit AI, and it is becoming increasingly difficult for traditional security measures to detect and prevent these attacks.

Here are the top 10 AI-powered cyberattacks to watch for in 2023:

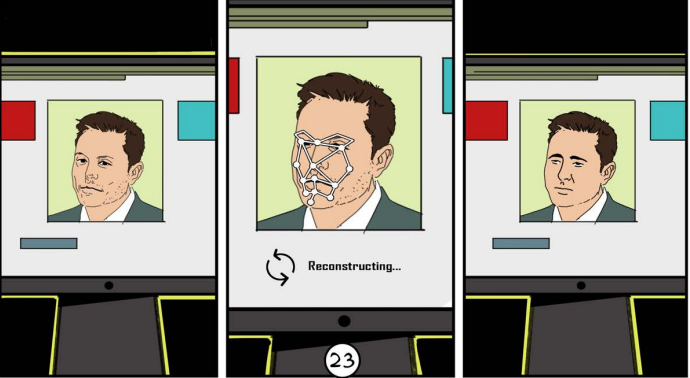

Deepfakes

Deepfakes are a form of AI-powered manipulation where existing images or videos are altered to create realistic but fake media. With deepfake technology, it is now possible to create convincing, fake videos or images that can spread false information and create chaos.

One of the primary concerns with deepfakes is their potential to spread misinformation and cause damage to reputations, institutions, and even democracies. Deepfakes can be used to create fake news or propaganda, manipulate public opinion, or even create blackmail material.

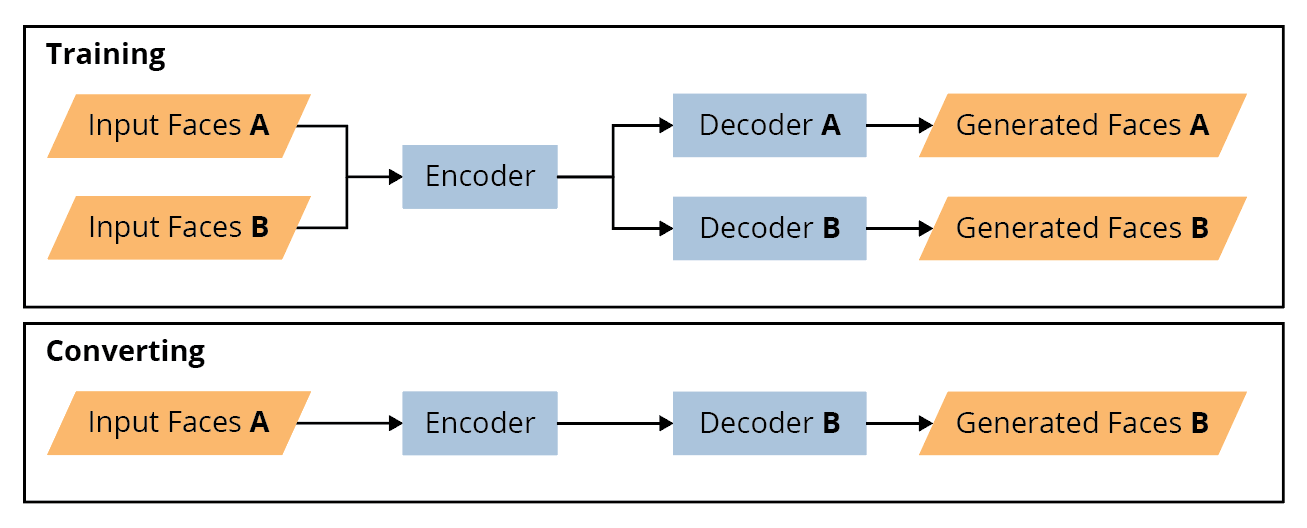

How do Deepfakes work?

Deepfakes are created using machine learning algorithms, specifically deep neural networks, that analyze and learn patterns in large sets of data, such as images or videos. The algorithm then generates a new output based on these patterns, which can be used to alter the original image or video.

One of the most concerning aspects of deepfakes is their potential to deceive viewers into believing they are real. These fake videos or images are often used for malicious purposes such as creating fake pornography or political propaganda.

Live Example

In one real-life example reported in 2019, criminals used AI-generated audio that mimicked a chief executive’s voice to direct an employee of a UK energy firm to transfer $243,000 to a fraudulent account. The attack was successful, and the money was never recovered.

Protecting yourself from Deepfakes

Protecting oneself against deepfakes can be challenging, but there are some steps that individuals and organizations can take to limit their impact. It is important to be aware of the existence of deepfakes and be cautious about trusting images or videos found online.

- One approach is to use advanced software tools that can detect deepfakes and distinguish them from real media. These tools can analyze images or videos for inconsistencies and identify subtle changes that suggest tampering.

- In addition, individuals can take precautions, such as verifying the source of the media and cross-checking information with trusted sources. Social media platforms can also play a role by implementing policies and tools to detect and remove deepfake content.

AI Vulnerability Hacking

Hackers are constantly finding new ways to exploit vulnerabilities in software, and one technique they are using increasingly is AI hacking. AI and machine learning tools are being used to run through code and identify vulnerabilities that can be exploited by hackers.

AI hacking tools are becoming more sophisticated, allowing hackers to quickly and accurately identify vulnerabilities that would take human experts much longer to find. These tools use machine learning algorithms to analyze large amounts of code and identify patterns that suggest the presence of a vulnerability. Once a vulnerability is identified, the tool can then generate an exploit that can be used to compromise the system.

Live Example

One frequently cited breach is that of the US Department of Defense’s (DoD) travel records system, disclosed in October 2018. Attackers gained access to sensitive travel records of US military and civilian personnel, and the breach affected up to about 30,000 people. Public reporting did not confirm that AI tooling was involved, but the incident illustrates the kind of exposed system that automated vulnerability scanning can find and exploit quickly.

How to Protect Yourself from AI Hacking

As AI hacking techniques become more advanced, it’s essential for individuals and organizations to take proactive measures to protect themselves from these attacks. Here are some steps you can take to help safeguard your systems:

- Keep your software up to date: Make sure that you regularly update all software and systems, including security software and firewalls. These updates often include patches and fixes for vulnerabilities that hackers can exploit.

- Use strong passwords: Password guessing is a common technique used by hackers, so it’s essential to use strong, complex passwords and enable two-factor authentication whenever possible.

- Train employees: It’s crucial to educate your employees about the risks of AI-powered attacks, including phishing and other social engineering techniques. Encourage them to be vigilant and cautious when opening emails or clicking on links.

- Monitor your systems: Regularly monitor your systems and networks for any unusual activity, such as unauthorized access attempts or suspicious logins.

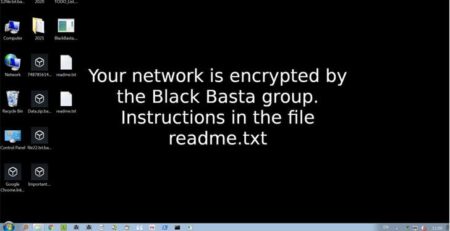

AI Powered Malware

As if traditional malware wasn’t bad enough, cybercriminals are now using artificial intelligence (AI) to create more advanced and sophisticated forms of malware. AI-powered malware is a new and emerging threat that is particularly concerning because it has the ability to learn and adapt to its environment, making it much harder to detect and defend against.

How does AI-powered malware work?

AI-powered malware works by using machine learning algorithms to analyze and identify vulnerabilities in computer systems. Once a vulnerability is identified, the malware can then use this information to exploit the weakness and gain access to the system. The malware can also learn from its environment and adapt its behavior accordingly, making it even more difficult to detect and defend against.

How AI impacts Malware

- AI enables malware to adapt and evolve its tactics based on its environment, making it elusive and challenging to detect.

- AI-powered malware can analyze user behavior, generate convincing phishing emails, and tailor attacks to specific users, increasing the likelihood of success.

- AI increases the speed and sophistication of malware attacks by automating tasks that would otherwise require significant human effort.

- AI enables malware to bypass traditional security measures by mimicking legitimate system behavior and leveraging advanced evasion techniques.

Live Example

One real-life example of AI-powered malware is DeepLocker, a proof of concept developed by IBM researchers and presented in 2018 to demonstrate the threat. DeepLocker uses a deep neural network to target specific victims and avoid detection by traditional security measures. It is designed to activate only when it recognizes specific criteria, such as the victim’s face, location, or voice, making it much harder to detect and defend against.

How to Protect Yourself from AI-Powered Malware

Protecting against AI-powered malware requires a multi-faceted approach that combines both technical and non-technical measures. Here are some steps to protect yourself and your organization from AI-powered malware:

- Be wary of suspicious emails: Phishing emails are a common tactic used by malware creators to trick users into downloading malicious attachments or clicking on malicious links. Be cautious of emails that ask you to download attachments or click on links, especially if they come from unknown senders or contain suspicious subject lines.

- Use anti-malware software: Installing anti-malware software can help detect and remove malware from your system. Look for software that includes features such as real-time scanning and automatic updates to stay protected against the latest threats.

- Monitor your network: Monitor your network for unusual activity or traffic that may indicate a malware infection. Use network monitoring tools to detect and respond to threats in real-time, and consider setting up intrusion detection and prevention systems to prevent unauthorized access.

Advanced Persistent Threats (APT)

Advanced Persistent Threats (APTs) are cyberattacks typically carried out by highly skilled and well-funded attackers, such as nation-states or organized criminal groups.

One of the main concerns with APTs is that they can be augmented with AI, which makes them even more sophisticated and difficult to detect. APTs using AI can learn and adapt to the target environment, making them more effective and able to bypass traditional security measures.

APTs are designed to infiltrate a system or network, remain undetected for an extended period of time, and steal sensitive information or cause damage to the target.

How do APTs work?

APTs work by using various techniques to gain access to a network or system, such as spear-phishing or exploiting vulnerabilities in software. Once they gain access, they use a combination of social engineering tactics and advanced techniques to remain undetected, such as using encrypted communication channels or hiding in legitimate network traffic.

Live Example

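One real-life example of a long-running, APT-style intrusion was the 2017 Equifax data breach. In this attack, hackers exploited an unpatched vulnerability in the Apache Struts web framework, gained access to Equifax’s systems, and remained undetected for over two months. They stole personal information such as names, Social Security numbers, and birth dates of about 147 million people. US authorities later charged members of the Chinese military over the attack. There is no public evidence that AI was used, but the breach shows how much damage a persistent, undetected intruder can cause, and AI-assisted reconnaissance and evasion would only make such intrusions harder to spot.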

AI-Based DDoS Attacks

Distributed Denial of Service (DDoS) attacks are a common form of cyberattack that aims to overwhelm a website or online service with traffic, rendering it inaccessible to users. In recent years, there has been an increase in the number of AI-powered DDoS attacks, which use machine learning algorithms to launch more sophisticated and effective attacks.

How do AI-based DDoS attacks work?

AI-based DDoS attacks work by using machine learning algorithms to study and understand the target website or service’s infrastructure, traffic patterns, and weaknesses. This allows the attackers to launch more targeted and effective attacks, often using multiple attack vectors simultaneously. AI-powered attacks can also adapt and change their tactics in real-time, making them more difficult to detect and defend against.

Live Example

One example of a DDoS attack was the 2016 attack on Dyn, a DNS provider that was used by major websites such as Twitter, Reddit, and Netflix. The attackers used a botnet made up of compromised Internet of Things (IoT) devices, such as cameras and routers, to launch a massive DDoS attack that disrupted access to these websites for several hours.

The Dyn attack was considered one of the largest DDoS attacks in history at the time. It did not rely on AI, but if the attackers had used AI to manage the botnet more efficiently and to compromise IoT devices, the damage could have been even greater.

How to protect yourself from AI-based DDoS attacks

To protect against AI-based DDoS attacks, organizations can take several steps.

- First, they can implement advanced DDoS protection and mitigation services that use machine learning and other AI-based techniques to detect and block attacks.

- Additionally, organizations should regularly monitor their network traffic and look for unusual spikes or patterns that could indicate an attack is underway.

- Another important step is to ensure that all devices and systems connected to the network are secure and up-to-date with the latest security patches. This includes IoT devices, which are often vulnerable to exploitation and compromise.

- Finally, organizations should develop and test a comprehensive incident response plan that outlines the steps to take in the event of a DDoS attack, including how to restore service and minimize damage.
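The traffic monitoring step in the list above can be sketched in a few lines. This is a minimal illustration, not a production detector: the function name `find_traffic_spikes`, the five-sample trailing window, and the three-standard-deviation threshold are all assumptions chosen for the example, and real mitigation services use far richer signals.

```python
from statistics import mean, stdev


def find_traffic_spikes(requests_per_minute, window=5, threshold=3.0):
    """Return the indices of samples that exceed the trailing-window
    mean by more than `threshold` standard deviations."""
    spikes = []
    for i in range(window, len(requests_per_minute)):
        baseline = requests_per_minute[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        # Use a floor of 1.0 so a perfectly flat baseline (sigma == 0)
        # does not flag every tiny fluctuation.
        limit = mu + threshold * max(sigma, 1.0)
        if requests_per_minute[i] > limit:
            spikes.append(i)
    return spikes


# Hypothetical per-minute request counts with one sudden burst.
traffic = [100, 104, 98, 101, 97, 103, 99, 950, 102, 100]
print(find_traffic_spikes(traffic))  # [7]
```

A trailing window keeps the baseline adaptive, but note the trade-off: once a burst enters the window it inflates the baseline, so a sustained attack needs a longer-term reference as well.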

Machine Learning Poisoning

Machine learning poisoning is a type of attack that targets the training data used to build machine learning models. It involves introducing malicious data into the training set, which can then result in the model producing incorrect or harmful results.

Machine learning poisoning attacks can be carried out by both internal and external threat actors, and can have significant consequences for businesses and organizations that rely on machine learning models for critical decision-making processes.

How does machine learning poisoning work?

Machine learning models are trained using large amounts of data, which is used to identify patterns and make predictions. A machine learning poisoning attack involves manipulating this data in a way that causes the model to produce incorrect or harmful results. This can be achieved by introducing small amounts of malicious data into the training set, which can then be amplified during the training process.
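A toy example makes the mechanism concrete. The sketch below assumes a deliberately simple nearest-centroid classifier over a single made-up "risk score" feature; the data, labels, and function names are hypothetical and only illustrate how a few mislabeled training points can move a decision boundary.

```python
def train_centroids(samples):
    """samples is a list of (value, label) pairs.
    Returns a dict mapping each label to the mean of its values."""
    sums, counts = {}, {}
    for value, label in samples:
        sums[label] = sums.get(label, 0.0) + value
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}


def predict(centroids, value):
    """Assign the label whose centroid is closest to the value."""
    return min(centroids, key=lambda label: abs(centroids[label] - value))


# Clean training data: low scores are benign, high scores are malicious.
clean = [(1.0, "benign"), (2.0, "benign"), (3.0, "benign"),
         (7.0, "malicious"), (8.0, "malicious"), (9.0, "malicious")]

# Poisoned points: high scores deliberately mislabeled as benign.
poison = [(12.0, "benign"), (12.0, "benign"), (12.0, "benign")]

clean_model = train_centroids(clean)
poisoned_model = train_centroids(clean + poison)

suspicious_score = 6.5
print(predict(clean_model, suspicious_score))     # malicious
print(predict(poisoned_model, suspicious_score))  # benign
```

The three injected points pull the benign centroid from 2.0 to 7.0, so a score that the clean model flags now slips through. Real models are more complex, but the failure mode is the same.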

How to protect against machine learning poisoning attacks

Protecting against machine learning poisoning attacks can be challenging, as they can be difficult to detect and mitigate. However, there are several steps that organizations can take to reduce the risk of a successful attack.

- First, organizations should implement strict controls around the data used to train machine learning models. This can include monitoring the sources of data, verifying data integrity, and implementing data access controls to limit the ability of attackers to manipulate data.

- Second, organizations should ensure that machine learning models are regularly tested and validated to identify any unexpected behavior or inaccuracies. Additionally, implementing monitoring and anomaly detection tools can help identify any unusual activity that may be indicative of a poisoning attack.

- Lastly, organizations should invest in security training for employees involved in the development and use of machine learning models. This training can help employees identify and report suspicious activity, as well as understand the potential consequences of a successful machine learning poisoning attack.
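One way to approach the data integrity step above is to record a cryptographic hash of every training file when the data is reviewed, then compare against that record before each training run. The sketch below assumes training data stored as files in a directory; the function names and file names are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path):
    """Hash a file in chunks so large datasets do not fill memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir):
    """Map each file's relative path to its SHA-256 digest."""
    return {
        p.relative_to(data_dir).as_posix(): sha256_of(p)
        for p in sorted(data_dir.rglob("*")) if p.is_file()
    }


def find_changed_files(data_dir, manifest):
    """List files that were modified, added, or removed since the
    trusted manifest was recorded."""
    current = build_manifest(data_dir)
    names = set(current) | set(manifest)
    return sorted(n for n in names if current.get(n) != manifest.get(n))


# Demonstration in a throwaway directory with made-up files.
with tempfile.TemporaryDirectory() as tmp:
    data_dir = Path(tmp)
    (data_dir / "features.csv").write_text("1.0,2.0\n3.0,4.0\n")
    (data_dir / "labels.csv").write_text("0\n1\n")
    trusted_manifest = build_manifest(data_dir)

    # Simulate an attacker flipping a label after the review.
    (data_dir / "labels.csv").write_text("1\n1\n")
    changed = find_changed_files(data_dir, trusted_manifest)

print(changed)  # ['labels.csv']
```

This only detects tampering after the manifest was created, so the manifest itself should be stored where the training pipeline cannot overwrite it. It does not help if the data was already poisoned at the source.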

Password Guessing

Password guessing is a type of cyberattack where an attacker attempts to gain unauthorized access to a system or account by guessing the password. This attack is usually done using automated tools that try a large number of commonly used passwords and dictionary words.

One of the main concerns with password guessing attacks is that they can be powered by AI and machine learning algorithms, which makes them more effective and harder to detect. These tools can use data from previous data breaches to create more accurate lists of commonly used passwords and can also learn from successful login attempts.

Live Example

One real-life example of how password guessing can cause damage is the LinkedIn data breach in 2012. In this attack, cybercriminals stole a database of user credentials, and in 2016 data covering roughly 167 million accounts was offered for sale on the dark web. Because the passwords were stored as unsalted SHA-1 hashes, attackers could recover many of them offline by hashing guesses of common passwords and comparing the results. The leak led to a spike in credential stuffing attacks, where cybercriminals use automated tools to attempt to log in to user accounts using stolen credentials.

How to Protect Yourself from Password Guessing Attacks

- To protect against password guessing attacks, individuals and organizations should follow best practices for creating strong passwords, such as using a mix of letters, numbers, and symbols and avoiding easily guessable information such as birthdays or names.

- Implementing two-factor authentication can also significantly reduce the risk of password guessing attacks by requiring a second form of verification, such as a code sent to a mobile device or biometric authentication.

- Additionally, regularly monitoring login attempts and enabling account lockouts after a certain number of failed attempts can help detect and prevent password guessing attacks. Educating users on how to identify and avoid phishing scams and other social engineering tactics can also help mitigate the risk of successful password guessing attacks.
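The account lockout idea from the list above might look like the following. This is a minimal in-memory sketch: the class name `LoginThrottle`, the default of five failures, and the 15-minute lockout are assumptions for illustration, and a real service would persist this state and usually track source addresses as well.

```python
import time


class LoginThrottle:
    """Lock an account for a while after too many failed logins."""

    def __init__(self, max_failures=5, lockout_seconds=900, clock=time.monotonic):
        self.max_failures = max_failures
        self.lockout_seconds = lockout_seconds
        self._clock = clock          # injectable so tests can control time
        self._failures = {}          # username -> consecutive failures
        self._locked_until = {}      # username -> time the lock expires

    def is_locked(self, username):
        until = self._locked_until.get(username)
        return until is not None and self._clock() < until

    def record_failure(self, username):
        count = self._failures.get(username, 0) + 1
        self._failures[username] = count
        if count >= self.max_failures:
            self._locked_until[username] = self._clock() + self.lockout_seconds
            self._failures[username] = 0  # start counting afresh after the lock

    def record_success(self, username):
        # A successful login clears the failure counter.
        self._failures.pop(username, None)


throttle = LoginThrottle(max_failures=3, lockout_seconds=60)
for _ in range(3):
    throttle.record_failure("alice")
print(throttle.is_locked("alice"))  # True
print(throttle.is_locked("bob"))    # False
```

The login handler would call `is_locked` before checking the password and reject the attempt outright while the lock is active, which caps how many guesses an automated tool gets per account.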

Human Impersonation

Human impersonation is a type of cyberattack that involves an attacker disguising themselves as a legitimate user in order to gain access to sensitive information or systems. This can be done through various means, such as phishing scams, social engineering tactics, or even using deepfake technology to create convincing fake videos or audio recordings.

The risks of human impersonation attacks are significant, as attackers can use this technique to gain access to sensitive information or systems, steal identities, or carry out fraudulent activities. These attacks can also cause reputational damage to individuals or organizations, as fake information or malicious content can be spread online.

Live Example

One real-life example of a human impersonation attack is the 2020 Twitter hack. In this attack, attackers were able to gain access to high-profile Twitter accounts, including those of Elon Musk, Bill Gates, and Barack Obama. They then used these accounts to post tweets promoting a bitcoin scam, which resulted in over $100,000 in losses.

The attackers used social engineering tactics, reportedly including phone-based phishing, to trick Twitter employees into giving them access to internal tools, which they then used to take over the accounts. The attack did not depend on AI, but AI-generated voices and fake profiles would make this kind of pretexting even more convincing.

Protecting Against Human Impersonation Attacks

There are several steps that individuals and organizations can take to protect against human impersonation attacks.

- First and foremost, it is important to remain vigilant and skeptical of unsolicited requests or messages, especially those that ask for sensitive information or access to systems.

- Individuals can also take steps to protect their online identities, such as regularly monitoring their social media profiles for fake accounts and using strong, unique passwords for each account.

- Organizations can implement security measures such as multi-factor authentication, intrusion detection systems, and employee training programs to identify and report suspicious activity.

- Additionally, using AI-powered tools to detect and prevent human impersonation attacks is becoming more common. These tools can analyze patterns of behavior, language, and other characteristics to identify potential impersonators and alert individuals or organizations to the risk.
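The multi-factor authentication mentioned in the list above is often implemented with time-based one-time passwords (TOTP), the six-digit codes shown by authenticator apps. The sketch below follows the published RFC 6238 algorithm with HMAC-SHA1 using only the Python standard library; the helper names and the one-step drift allowance are assumptions, and a production system should rely on a maintained library and secure secret storage.

```python
import hashlib
import hmac
import struct


def totp(secret, unix_time, step=30, digits=6):
    """Compute a time-based one-time password (RFC 6238, HMAC-SHA1)."""
    counter = unix_time // step  # number of elapsed time steps
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F      # dynamic truncation from RFC 4226
    value = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(value % 10 ** digits).zfill(digits)


def verify_totp(secret, submitted, unix_time, step=30, digits=6, drift_steps=1):
    """Accept codes from the current step and a small clock-drift window."""
    for delta in range(-drift_steps, drift_steps + 1):
        moment = unix_time + delta * step
        if moment < 0:
            continue
        candidate = totp(secret, moment, step, digits)
        # Constant-time comparison avoids leaking how many digits matched.
        if hmac.compare_digest(candidate.encode(), submitted.encode()):
            return True
    return False


# Test secret and timestamp taken from RFC 6238, Appendix B.
shared_secret = b"12345678901234567890"
print(totp(shared_secret, 59, digits=8))  # 94287082, the value listed in the RFC
```

Because the code depends on a secret the impersonator does not hold, a convincing voice or profile alone is not enough to get through the login.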

AI-Powered Social Engineering

Social engineering is the art of manipulating people into divulging confidential information or performing actions that may not be in their best interest. Cybercriminals have been using social engineering techniques for a long time, and with the advancements in artificial intelligence (AI), these attacks have become even more effective.

AI-powered social engineering involves using machine learning algorithms to analyze social media posts, public data, and other online activity to create a profile of a target individual. This profile can then be used to craft highly personalized and convincing phishing emails or other forms of social engineering attacks. These attacks are often so convincing that even security-savvy individuals can fall victim to them.

Protecting Yourself from AI-Powered Social Engineering

To protect yourself from AI-powered social engineering attacks, it’s important to be vigilant and to take the following steps:

- Be cautious of unsolicited emails, even if they appear to be from a trusted source.

- Always verify the authenticity of an email or message by double-checking the sender’s address and examining the message for any signs of manipulation or impersonation.

- Regularly review your social media settings and adjust your privacy settings to limit the amount of personal information that is publicly visible.
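Checking the sender's address, as suggested in the list above, can be partly automated. The sketch below extracts the domain from a `From` header and compares it against an allowlist; `TRUSTED_DOMAINS` and the function names are hypothetical, and `example.com` stands in for an organization's real domain.

```python
from email.utils import parseaddr

# Hypothetical allowlist of domains the organization actually uses.
TRUSTED_DOMAINS = {"example.com"}


def sender_domain(from_header):
    """Return the lowercased domain of the address in a From header,
    or an empty string if no address with an @ sign is present."""
    _, address = parseaddr(from_header)
    if "@" not in address:
        return ""
    return address.rpartition("@")[2].lower()


def is_trusted_sender(from_header, trusted=TRUSTED_DOMAINS):
    """True if the sender's domain is a trusted domain or one of its subdomains."""
    domain = sender_domain(from_header)
    return any(domain == d or domain.endswith("." + d) for d in trusted)


print(is_trusted_sender("IT Support <helpdesk@example.com>"))           # True
print(is_trusted_sender("IT Support <helpdesk@examp1e.com>"))           # False
print(is_trusted_sender("IT Support <helpdesk@example.com.evil.net>"))  # False
```

The display name ("IT Support") is ignored on purpose, since attackers control it freely. A `From` header can itself be forged, so a check like this complements, rather than replaces, mail-server protections such as SPF, DKIM, and DMARC.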

Conclusion

AI has become an increasingly powerful tool in cybersecurity, with the potential to both protect and harm organizations. Like any tool, AI can be exploited by cybercriminals for nefarious purposes, or used by organizations to enhance their cybersecurity defenses.

It is essential that organizations take proactive measures to integrate AI into their cybersecurity strategies, and implement best practices to safeguard against potential vulnerabilities. This includes implementing multi-factor authentication, encrypting sensitive data, regularly updating and patching software, and educating employees on the importance of cybersecurity and how to identify and avoid phishing attacks.

In addition, it is crucial that organizations stay up to date with the latest AI-powered threats and continuously evaluate their cybersecurity posture to identify and mitigate potential risks. By taking these proactive steps and using AI in a responsible manner, organizations can better protect themselves against cyber threats and safeguard their valuable assets and data.